Reclaiming Gigabytes From Docker With One Command

In my previous blog post, I installed Portainer with Docker Compose. It’s a great graphical user interface for managing Docker containers and images. While exploring Portainer, I noticed something strange: I had a lot of unused images! What happened?

This is my story of how I reclaimed almost 200GB of disk space just by deleting unused Docker images.

There are a few scenarios in which a Docker image can become unused. For a selfhoster, the most likely one is a container that now runs an updated image while the old image is still on disk. That old image is considered unused.

Another scenario is an image that has no tags and is not used by any container. This can happen when you build your own image without giving it a name, and no other image is built on top of it. This is called a dangling image, which is a little different from an unused image: an unused image can still have a tag, while a dangling image has none.
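To see the difference on your own system: Docker can list dangling images directly with docker image ls --filter dangling=true, but as far as I know there is no single filter for “unused”. The POSIX shell sketch below (list_unused_images is a hypothetical helper name, not a Docker command) compares every local image ID with the image IDs referenced by containers, running or stopped. Whatever is left over is a candidate for docker image prune -a.

```shell
#!/bin/sh
# Sketch: print the IDs of local images that no container (running or
# stopped) references. list_unused_images is a hypothetical helper name.
list_unused_images() {
  # Full image IDs (sha256:...) referenced by any container
  used=$(docker container ls --all --quiet | while read -r cid; do
    docker container inspect --format '{{.Image}}' "$cid"
  done)
  # Every local image ID, de-duplicated (one image can carry several tags)
  docker image ls --quiet --no-trunc | sort -u | while read -r img; do
    # -x: whole-line match, -F: fixed string (no regex)
    if ! printf '%s\n' "$used" | grep -qxF -- "$img"; then
      echo "$img"
    fi
  done
}
```

Source the file and run list_unused_images as a user that is allowed to talk to the Docker daemon (for example root).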

The images themselves do not consume any system resources other than storage space. Over time, though, unused images can take up a lot of it, so it is advisable to clear them out once in a while.
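Docker can report this storage use itself with docker system df, which shows the size and the reclaimable space for images, containers, local volumes and build cache. A minimal sketch that prints one compact line per type (docker_space_report is a hypothetical helper name; the {{.Type}}, {{.Size}} and {{.Reclaimable}} placeholders are my understanding of the fields behind the default table columns):

```shell
#!/bin/sh
# Sketch: one line per object type (images, containers, local volumes,
# build cache) with its total size and how much could be reclaimed.
# docker_space_report is a hypothetical wrapper around `docker system df`;
# the {{...}} placeholders are Go template fields.
docker_space_report() {
  docker system df --format '{{.Type}}: {{.Size}} total, {{.Reclaimable}} reclaimable'
}
```

Plain docker system df prints the same information as a table, and docker system df -v breaks it down per image, container and volume.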

You might want to keep a few of the old images around, though. They can be useful if an update ships a faulty image and you want to roll back to a previous version.

I failed to clean up the old images regularly. Docker kept growing and growing in size and I didn’t really notice it (I have plenty of storage left on my NAS). It wasn’t until I installed Portainer that I noticed the number of unused images… Yikes!

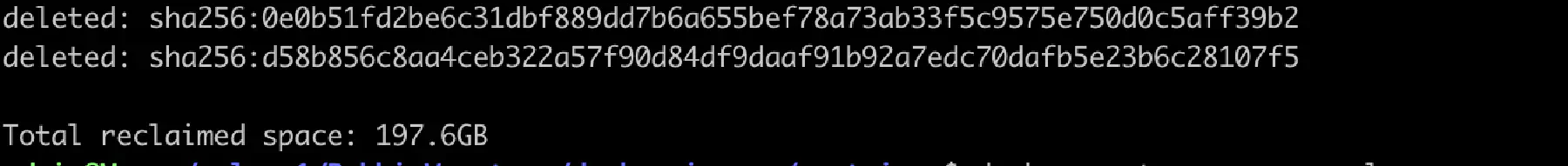

I ran the docker system prune -a command for the first time and it took quite a while… How much space had I actually wasted on unused stuff?

Almost 200GB! Whoops! Time to make sure that doesn’t happen again.

I want to clean up my Docker images automatically. Almost every system lets you schedule tasks, and on most Linux-based systems you can use cron for this.

First off, we need to be root to make changes to the system crontab file, /etc/crontab. You can become root with the following command:

$ sudo -i

Password:

Type in your user password and you should now be the root user. Next, we need to edit the crontab file. I use vi for this, but if you have nano available you can use that too:

$ vi /etc/crontab

We need to add a new line to this file. I plan to run this command once every day at 20:00. The line we need to add will look like this:

0 20 * * * root docker system prune -f --filter until=72h

For those who don’t know cron, this can look a little daunting, but it is actually very easy.

The first five fields are the minute, hour, day of month, month and day of week. A * means “every” in this context. In the example above, the command runs at 20:00 on every day of the month, in every month and on every day of the week.

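The sixth field is the user the command runs as, in this case root. This is helpful if you’d like to run some jobs as a non-root user. Note that this user field only exists in system-wide crontabs such as /etc/crontab; per-user crontabs edited with crontab -e leave it out.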
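To make the field layout concrete, here is a small POSIX shell sketch that splits a system-crontab line into its parts and labels them. explain_crontab_line is a hypothetical helper for illustration; it assumes the /etc/crontab layout described above and does not validate the values.

```shell
#!/bin/sh
# Sketch: label the parts of a system-crontab line (/etc/crontab layout:
# five schedule fields, a user, then the command). explain_crontab_line
# is a hypothetical helper for illustration, not part of cron.
explain_crontab_line() {
  set -f          # stop the shell from expanding "*" into file names
  set -- $1       # deliberately unquoted: split the line on whitespace
  set +f
  if [ "$#" -lt 7 ]; then
    echo "expected 5 schedule fields, a user and a command" >&2
    return 1
  fi
  # printf reuses the format for each label/value pair
  printf '%-14s%s\n' 'minute:' "$1" 'hour:' "$2" 'day of month:' "$3" \
    'month:' "$4" 'day of week:' "$5" 'user:' "$6"
  shift 6
  # "$*" re-joins the remaining words with single spaces
  printf '%-14s%s\n' 'command:' "$*"
}
```

For example, explain_crontab_line '0 20 * * * root docker system prune -f --filter until=72h' prints seven labelled lines: minute 0, hour 20, three * fields, user root and finally the full docker command.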

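Everything after the user is the command to execute, in this case docker system prune -f --filter until=72h. This prunes stopped containers, unused networks, dangling images and unused build cache that are older than 72 hours. Without -a, only dangling images are removed; to also remove unused images that still have a tag (such as the old versions left behind after an update), add -a as in the manual command I ran earlier: docker system prune -af --filter until=72h. The -f is needed because Docker normally asks for confirmation before pruning; -f skips that prompt so the job can run unattended.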
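If you would rather add the line from a script than in vi, a minimal sketch follows. add_line_once is a hypothetical helper name: it appends the line only if it is not already in the file, so running it twice does not schedule the prune twice. Run it as root when the target is /etc/crontab.

```shell
#!/bin/sh
# Sketch: append a line to a crontab-style file unless it is already present.
# add_line_once is a hypothetical helper; run it as root for /etc/crontab.
add_line_once() {
  file=$1
  line=$2
  # -x: whole-line match, -F: literal string, so "*" is not a wildcard
  if grep -qxF -- "$line" "$file" 2>/dev/null; then
    echo "already present in $file"
    return 0
  fi
  # If the file does not end in a newline, add one first so the new entry
  # starts on its own line (tail -c 1 prints the last byte; $(...) strips "\n")
  if [ -s "$file" ] && [ -n "$(tail -c 1 "$file")" ]; then
    printf '\n' >> "$file"
  fi
  # printf also ends the new entry with the newline cron expects
  printf '%s\n' "$line" >> "$file"
  echo "added to $file"
}
```

For example: add_line_once /etc/crontab '0 20 * * * root docker system prune -f --filter until=72h'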

It is important to monitor your servers and look for oddities. Checking resource usage is a good way to spot that something is off, but you really need proper monitoring tools for this. I will probably explore this topic more in the future, so stay tuned!
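As a first step in that direction, here is a minimal disk-space check that could itself be scheduled with cron. The function name check_disk_usage, the default directory /var/lib/docker (Docker’s usual data directory on Linux; docker info --format '{{.DockerRootDir}}' shows where yours is) and the 80% threshold are all assumptions.

```shell
#!/bin/sh
# Sketch: warn when the filesystem holding a directory is fuller than a limit.
# check_disk_usage is a hypothetical helper; the defaults are assumptions.
check_disk_usage() {
  dir=${1:-/var/lib/docker}
  limit=${2:-80}
  # df -P prints POSIX output; field 5 of the second line is the
  # capacity percentage, e.g. "42%"
  used=$(df -P "$dir" 2>/dev/null | awk 'NR == 2 { sub(/%/, "", $5); print $5 }')
  if [ -z "$used" ]; then
    echo "could not read disk usage for $dir" >&2
    return 2
  fi
  if [ "$used" -ge "$limit" ]; then
    echo "WARNING: filesystem of $dir is ${used}% full (limit ${limit}%)"
    return 1
  fi
  echo "OK: filesystem of $dir is ${used}% full"
}
```

It returns 0 when usage is below the limit, 1 when it is at or above the limit, and 2 when df cannot read the directory, so a wrapper can act on the exit status.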

Do you have any valuable lessons learned? Share them in the comments below!